The long, fast march of graphic generative AIs

The pace of improvement in graphic generative artificial intelligence is stunning

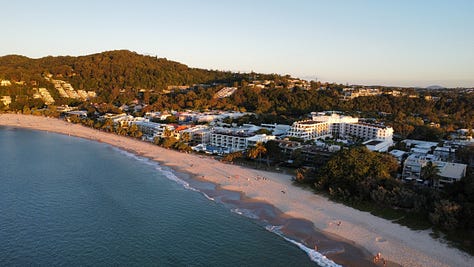

On Saturday 9 July 2022 I was coming to the tail end of my maternal family’s annual Noosa gathering. It had been a good trip, and as the sun set on one of my last full days in Queensland, I took my DJI Mini SE out for a spin at Sunshine Beach, and got some gorgeous shots.

Fate had other plans though, and the following morning I came down with COVID for the first time. After the rest of my family had left, I moon-walked over to a villa at Noosa Lakes Resort with all my stuff, and I would spend the next week there.

July 2022 was a very similar world to the one we live in today. It had COVID (but was part of the same post-pandemic era, where people are vaccinated and pandemic-era restrictions are a thing of the past), and the internet and culture were largely the same (except Twitter now has a different logo). I had the same phone, flew the same drone, and travelled around with the same bag as I do today.

After all, it was only 18 months ago.

But in July 2022, AI was still a whisper hanging around the margins. ChatGPT wouldn’t launch for another 5 months, and while I had played with GPT-3 and found it very impressive, we weren’t anywhere close to the capabilities of GPT-4. Moreover, in July 2022 if I talked to anyone at my family gathering (or even most of my colleagues at work) about generative AI, they’d give me a blank look.

In some ways the biggest name in generative artificial intelligence in July 2022 wasn’t anything from OpenAI at all, but Midjourney, which had been featured on the front cover of the Economist the previous month:

I had heard about Midjourney from a friend who had been playing around with it in the closed beta, and on 11 July 2022, the day before the closed beta ended, I joined the fun and, as I lay wracked with COVID, began seeing what the generative AI program could do for me.

My very first prompt was “A spaceship flies over a farm, solarpunk, studio ghibli style, bright, daytime, vibrant colours, landscape” and these were the first outputs I got from that prompt:

This was Midjourney V2 and while it wasn’t exactly bad by what you could expect at the time (all of this seemed like magic), it was definitely bad by the standards of December 2023.

It had a distinctly Midjourney Style that it would default to, that always seemed like it was straight out of a surrealist dream sequence - not bad exactly, but limited, full of haunted figures with indistinct and asymmetrical faces. If you whacked in quite simple prompts, you’d get things like this (best case scenario):

Moving it away from that distinctly Midjourney Style was the work of careful prompt crafting, which could be done, but even then the results were often uncanny - the kind of scenes that looked good from a distance but upon closer inspection didn’t quite make sense.

My bout of COVID came to an end, and I left Midjourney behind. It had been an engaging way to spend time while sick, but the practical uses at the time were limited. While Midjourney V2 could occasionally produce works of stunning beauty, generating them was a matter of patience and thousands of iterations.

I came back to Midjourney in April 2023. April 2023 was a different world to July 2022. ChatGPT had taken the world by storm, and GPT-4 had launched the previous month. Where in July 2022 no one had heard of generative AI, by April 2023 everyone had, and every government in the world was hastily rewriting its AI strategy and considering how to regulate the technology.

I came back to Midjourney for a different reason though - I had a Dungeons and Dragons one-shot to organise. V5 had launched about two weeks prior, and I had heard good things. Bringing settings and characters to life was always my main interest in graphic AIs, and it looked like the technology was now mature enough to make that viable.

And so I set out to bring Revelle, my magical steampunk 19th-century city closely inspired by Paris, to life:

By stark contrast with V2, V5 was actually good and could be used to create any number of images easily, without the large number of duds that V2 entailed. Sure, the usual AI quirks were still there - hands would have too many fingers, objects would merge together - but it was night and day compared with the models of even 9 months earlier. In particular, V5 could do things that are quite difficult for AIs to do, like creating portraits of characters from non-existent races.

Since then, I’ve been using Midjourney semi-regularly for various projects, including my birthday party poster in May 2023, which was a more complicated affair also involving Canva, Topaz Gigapixel, and Officeworks.

Earlier this month, of course, V6 of Midjourney launched, and I’ve returned to playing around with it. One of the things that previous versions of Midjourney had struggled with was sailing ships - they wouldn’t know how everything fit together, and what you ended up with was a mess of rigging, sails and masts. With V6, this was one of the first images I got out of the gate:

With many previous versions of Midjourney, careful prompt crafting was required to bring ideas to life: you had to carefully include styles, features, and other traits, and run many iterations, in order to get the best product. One of the striking things about V6 is how unnecessary this is. Very simple prompts (like the one above) produce excellent results reliably off the bat, and further specification in the prompt simply refines the composition of the image, rather than the quality.

https://twitter.com/HowardFMaclean/status/1739202464505807350

So when I decided to put my favourite poem on a poster featuring a tall ship in a storm, it only took a relatively small number of prompt tweaks (to lower the lighting and zoom out a little bit), to get a good background, a lot less work than the Ozymandias poster 8 months before.

A lot of our conversations about AI are dominated by the prospect of general artificial intelligence, and by the philosophical, regulatory, economic and other big questions posed by the emergence of this kind of capability. Over the course of 2023, further advances in the capabilities of text-based models (like GPT-4 and PaLM) quickly turned the question from whether we could into whether we should.

Similarly, the focus of the developers of these high-end text-based models (OpenAI, Google, Anthropic) quickly turned to how to curate the output of their models via reinforcement learning and other fine-tuning, especially after the escapades of the early version of GPT-4, known as Sydney. It’s a common line of thinking that efforts to sanitise output come with inherent trade-offs against raw capability, which has led to frequent complaints that these models have been ‘lobotomised’ following release. The recent civil war at OpenAI was sparked in part by a tech demonstration, so these issues are unlikely to go away.

But graphic AIs, which output images, have fewer of these kinds of issues than text-based models, and the pace of advancement is fast, with results getting better all the time (although they have their own problems - people, for instance, are by default white and very physically attractive). It’s been a series of clear, unqualified advances in technology over the past two years. Interesting things have also been happening with DALL-E 3, Microsoft Designer, and Adobe Firefly; I’m just not as familiar with those.

Right now, it’s a tool that you can just use to bring ideas and images to life. Which I recommend that you do. What images do you have bouncing around your head but don’t have the artistic skill to put to screen? Now you can get them out.

Happy creating, a Merry Christmas and a bracing New Year.